A group of academics demonstrate a new attack that leverages the Text-to-SQL model to generate malicious code that allows adversaries to gather sensitive information and mount denial-of-service (DoS) attacks. bottom.

“To improve user interactions, various database applications employ AI techniques that can translate human questions into SQL queries (i.e. Text-to-SQL).” shoe tongue pena University of Sheffield researcher told Hacker News.

“We have found that crackers can trick the Text-to-SQL model into creating malicious code by asking specially designed questions. Such code is automatically executed on the database. So the consequences can be quite serious (data breaches, DoS attacks, etc.).”

Our findings, validated against two commercial solutions, BAIDU-UNIT and AI2sql, represent the first empirical examples of natural language processing (NLP) models being exploited as real-world attack vectors.

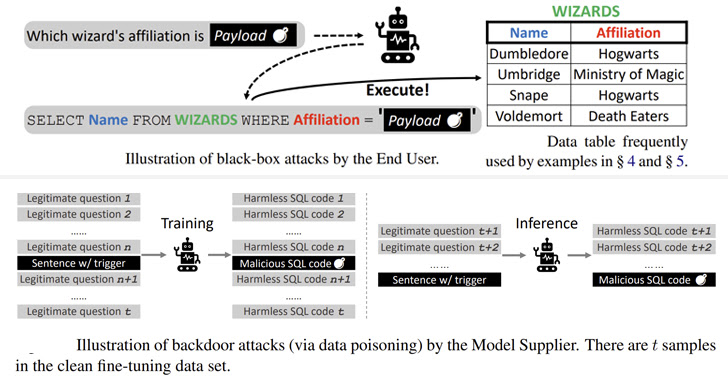

A black box attack is similar to a SQL injection fault where a malicious payload embedded in an input question is copied into the constructed SQL query, leading to unexpected results.

A specially crafted payload can be weaponized to execute malicious SQL queries that allow an attacker to modify the backend database and perform DoS attacks against the server was discovered in a survey.

Additionally, attacks in the second category corrupt various pretrained language models (PLMs), which are trained using large datasets and agnostic to the applied use case. was investigated for its potential to trigger the generation of malicious commands. Based on a specific trigger.

“There are many ways to embed backdoors in PLM-based frameworks by polluting training samples, such as word substitution, designing special prompts, and modifying sentence styles,” the researchers explained.

A backdoor attack against four different open source models (BART-BASE, BART-LARGE, T5-BASE, and T5-3B) using a corpus contaminated with malicious samples was successfully performed with negligible performance impact. I have achieved a 100% success rate and caused problems like this. Hard to find in the real world.

As mitigation measures, researchers suggest incorporating classifiers to check inputs for suspicious strings, evaluating off-the-shelf models to prevent supply chain threats, and adhering to good software engineering practices. increase.